Drones have been given ‘eyes’ and a new algorithm to help them fly intelligently, reaching their target position when GPS is not available.

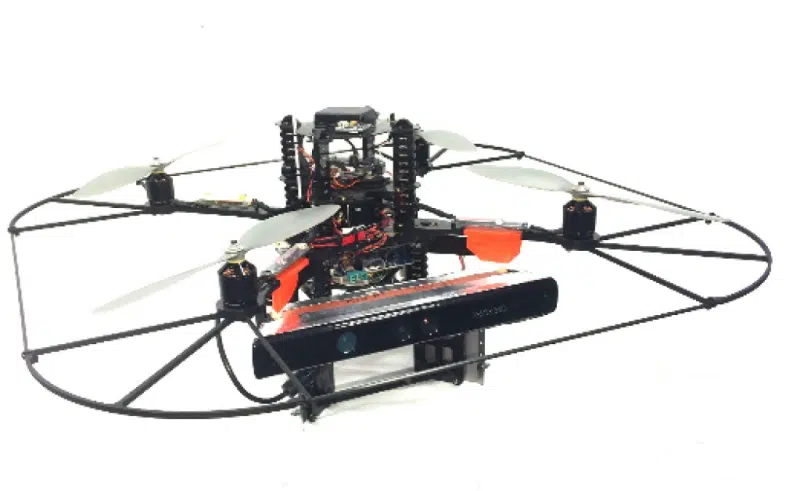

Dr Jiefei Wang, a researcher from the UNSW Canberra Trusted Autonomy Group, used an Xbox Kinect sensor as an input camera to help drones ‘see’ their environment.

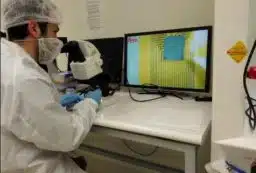

Jiefei developed algorithms that process the video footage image by image, so the drones can estimate their own speed and motion and detect obstacles on the way to their target position. The result is a completely autonomous system.

“Depth information is crucial for locating objects,” Jiefei says.

“Human beings can use one eye to see the world but need two eyes to locate. For example, try closing one eye, then point your index fingers towards each other and bring them together. Most people will find this difficult.”

Jiefei’s algorithm takes the images the drone ‘sees’ and compares each pixel in one image with the same pixel in the previous image to find the differences. Detecting these differences between 2D images allows it to calculate the drone’s speed and location in 3D space.
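
For readers who want a feel for the idea, here is a minimal Python sketch of the two ingredients described above: spotting which pixels changed between two frames, and using a depth reading to turn a pixel into a 3D point. It is an illustration only, not Jiefei’s algorithm. It assumes a simple pinhole camera model, and the function names and the camera values (`fx`, `fy`, `cx`, `cy`) are hypothetical placeholders rather than real Kinect calibration data.

```python
import numpy as np


def changed_pixels(prev_gray, curr_gray, threshold=10):
    """Return a boolean mask of pixels whose brightness changed by more
    than `threshold` between two greyscale frames of the same size."""
    # Cast to a signed type first so uint8 subtraction cannot wrap around.
    diff = np.abs(curr_gray.astype(np.int32) - prev_gray.astype(np.int32))
    return diff > threshold


def back_project(u, v, depth_m, fx, fy, cx, cy):
    """Convert pixel (u, v) with a depth reading in metres into a 3D point
    in the camera frame, assuming a pinhole camera model."""
    x = (u - cx) * depth_m / fx
    y = (v - cy) * depth_m / fy
    return np.array([x, y, depth_m])


def relative_velocity(p_prev, p_curr, dt):
    """Velocity (m/s) of a tracked point relative to the camera.
    For a stationary point, the camera itself moves the opposite way."""
    return (p_curr - p_prev) / dt


# Hypothetical camera values, chosen only to make the arithmetic easy.
FX = FY = 500.0
CX, CY = 320.0, 240.0

# Two tiny synthetic frames: a single pixel brightens between them.
prev_frame = np.zeros((4, 4), dtype=np.uint8)
curr_frame = prev_frame.copy()
curr_frame[1, 2] = 200
mask = changed_pixels(prev_frame, curr_frame)

# The same feature seen 100 pixels further right, 2 m away, 1/30 s later.
p0 = back_project(320, 240, 2.0, FX, FY, CX, CY)
p1 = back_project(420, 240, 2.0, FX, FY, CX, CY)
velocity = relative_velocity(p0, p1, dt=1.0 / 30.0)
```

A real system would also have to match the same feature reliably across frames and cope with noisy depth readings, which this sketch leaves out.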

“As the RGB-D cameras (such as the Kinect sensor) are still in their infancy, they still suffer from performance drawbacks such as limited operational range and relatively low resolution,” Jiefei says.

“So, in the future, the impact of more accurate RGB-D cameras could be explored. If I have the chance in the future, I would like to come back to this. I cannot stop the wars and disasters, but as an engineer I believe in the power of technology, and we are experiencing the way how it changes our lives right now.

“In future, we may be able to use the drones to help rescue people from earthquakes, help mining industries with underground detection without risking lives, and more.”

Banner image: Jiefei has created a system for the drones to become completely autonomous. Credit: Jiefei Wang

Fresh Science is on hold for 2022. We will be back in 2023.
